We provide three variants of crisp reference alignments (the confidence value of every correspondence is 1.0). Each variant contains 21 alignments (test cases), which corresponds to the complete alignment space between 7 ontologies from the OntoFarm data set. These 7 ontologies are a subset of the 16 ontologies in this track [4]; see the OntoFarm data set web page.

We provide three evaluation variants (M1, M2 and M3) for each reference alignment.

rar2-M3 is used as the main reference alignment for this year. It will also be used in the synthesis paper.

| | ra1 | ra2 | rar2 |
|---|---|---|---|
| M1 | ra1-M1 | ra2-M1 | rar2-M1 |
| M2 | ra1-M2 | ra2-M2 | rar2-M2 |
| M3 | ra1-M3 | ra2-M3 | rar2-M3 |

For the evaluation based on reference alignments, we first filtered out of the generated alignments all instance-to-any_entity and owl:Thing-to-any_entity correspondences, because they are not contained in the reference alignments. We then computed Precision, Recall, F1-measure, F2-measure and F0.5-measure. To compute average Precision and Recall over all alignments, we used absolute scores, i.e. we summed the TP, FP and FN counts across all 21 test cases and computed precision and recall from those totals. This corresponds to micro-average precision and recall. Therefore, the resulting numbers can differ slightly from those computed by the SEALS platform, which uses macro-average precision and recall. We then computed the F1-measure in the standard way. Finally, we found the highest average F1-measure by thresholding (where possible).
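The difference between the two averaging schemes can be illustrated with a minimal Python sketch. The `Counts` class, the function names and the two example test cases are hypothetical and chosen only for illustration; they are not taken from the evaluation code or from the actual results.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Counts:
    """TP/FP/FN counts for one test case (hypothetical container)."""
    tp: int
    fp: int
    fn: int


def precision(c: Counts) -> float:
    # Fraction of returned correspondences that are correct.
    return c.tp / (c.tp + c.fp) if (c.tp + c.fp) else 0.0


def recall(c: Counts) -> float:
    # Fraction of reference correspondences that were found.
    return c.tp / (c.tp + c.fn) if (c.tp + c.fn) else 0.0


def micro_average(cases):
    # Sum the absolute counts over all test cases first, then compute P and R once.
    total = Counts(
        tp=sum(c.tp for c in cases),
        fp=sum(c.fp for c in cases),
        fn=sum(c.fn for c in cases),
    )
    return precision(total), recall(total)


def macro_average(cases):
    # Compute P and R per test case, then take the unweighted mean.
    n = len(cases)
    return (
        sum(precision(c) for c in cases) / n,
        sum(recall(c) for c in cases) / n,
    )


# Two made-up test cases of very different size.
cases = [Counts(tp=9, fp=1, fn=1), Counts(tp=1, fp=1, fn=3)]
micro_p, micro_r = micro_average(cases)
macro_p, macro_r = macro_average(cases)
print(f"micro: P={micro_p:.3f} R={micro_r:.3f}")
print(f"macro: P={macro_p:.3f} R={macro_r:.3f}")
```

In this made-up example the large test case dominates the micro average, while the macro average weights both test cases equally, which is why the two schemes can report different numbers for the same matcher.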

To provide some context for understanding the matchers' performance, we included two simple string-based matchers as baselines. StringEquiv (previously called Baseline1) is a string matcher based on string equality applied to the lowercased local names of entities (this baseline was also used in the anatomy track in 2012). edna (a string edit distance matcher) was adopted from the benchmark track; with respect to performance it is very similar to the previously used baseline2.
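As a rough illustration of the StringEquiv idea described above, the following Python sketch matches entities whose lowercased local names are equal. It is not the actual baseline implementation: the function names, the rule used to extract a local name from an IRI, and the example IRIs are all assumptions made for this sketch.

```python
def local_name(iri: str) -> str:
    """Return the part of an IRI after '#', or else the last path segment.

    This extraction rule is an assumption of the sketch.
    """
    if "#" in iri:
        return iri.rsplit("#", 1)[1]
    return iri.rstrip("/").rsplit("/", 1)[-1]


def string_equiv(source_entities, target_entities):
    """Match entities whose lowercased local names are identical.

    Returns (source, target, confidence) triples with confidence 1.0.
    """
    # Index target entities by their lowercased local name.
    index = {}
    for target in target_entities:
        index.setdefault(local_name(target).lower(), []).append(target)

    matches = []
    for source in source_entities:
        for target in index.get(local_name(source).lower(), []):
            matches.append((source, target, 1.0))
    return matches


# Hypothetical IRIs, used only to exercise the sketch.
source = ["http://example.org/a#Paper", "http://example.org/a#Author"]
target = ["http://example.org/b#paper", "http://example.org/b#Reviewer"]
result = string_equiv(source, target)
print(result)
```

Only the `Paper`/`paper` pair is matched here, since the comparison ignores case but otherwise requires exact equality of the local names.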

The tables below show the results of all 11 tools and the two baselines for all combinations of evaluation variants and crisp reference alignments. For each matcher, precision, recall, F1-measure, F2-measure and F0.5-measure are reported at the threshold that yields that matcher's highest average F1-measure. An empty row means that no results are reported for that matcher in that variant. F1-measure is the harmonic mean of precision and recall. F2-measure (beta=2) weights recall higher than precision, and F0.5-measure (beta=0.5) weights precision higher than recall.
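The measures described above follow the usual F-beta definition, F_beta = (1 + beta^2) * P * R / (beta^2 * P + R). The Python sketch below shows that formula together with a simple threshold sweep that picks the threshold with the highest F1-measure. The function names, the input format and the choice to keep correspondences whose confidence is greater than or equal to the threshold are assumptions of this sketch, not details of the actual evaluation code.

```python
def f_beta(precision: float, recall: float, beta: float = 1.0) -> float:
    """Standard F-beta measure; beta > 1 favors recall, beta < 1 favors precision."""
    if precision == 0.0 and recall == 0.0:
        return 0.0
    b2 = beta * beta
    return (1 + b2) * precision * recall / (b2 * precision + recall)


def best_threshold(scored, n_reference):
    """Find the confidence threshold with the highest F1-measure.

    scored: (confidence, is_correct) pairs for one matcher, pooled over all
            test cases (hypothetical input format).
    n_reference: total number of reference correspondences.
    Assumption: a correspondence is kept if confidence >= threshold.
    """
    best = (0.0, 0.0)  # (threshold, F1)
    for threshold in sorted({conf for conf, _ in scored} | {0.0}):
        kept = [ok for conf, ok in scored if conf >= threshold]
        tp = sum(kept)
        p = tp / len(kept) if kept else 0.0
        r = tp / n_reference if n_reference else 0.0
        f1 = f_beta(p, r)
        if f1 > best[1]:
            best = (threshold, f1)
    return best


# Precision 0.78 and recall 0.89, as in one of the GraphMatcher rows below.
p, r = 0.78, 0.89
print(round(f_beta(p, r, 0.5), 2), round(f_beta(p, r, 1.0), 2), round(f_beta(p, r, 2.0), 2))

# Made-up scored correspondences, used only to exercise the sweep.
scored = [(0.9, True), (0.8, True), (0.3, False), (0.2, False)]
print(best_threshold(scored, n_reference=2))
```

Because the published values are presumably computed from unrounded precision and recall, recomputing the F-measures from the rounded table entries may differ in the last digit for some rows.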

| Matcher | Threshold | Precision | F0.5-measure | F1-measure | F2-measure | Recall |
|---|---|---|---|---|---|---|
| TOMATO | 0.0 | 0.73 | 0.69 | 0.63 | 0.59 | 0.56 |
| StringEquiv | 0.0 | 0.88 | 0.76 | 0.64 | 0.55 | 0.5 |
| Matcha | 0.0 | 0.76 | 0.74 | 0.72 | 0.69 | 0.68 |
| SORBETMtch | 0.0 | 0.78 | 0.77 | 0.76 | 0.76 | 0.75 |
| LogMapLt | 0.0 | 0.84 | 0.76 | 0.66 | 0.58 | 0.54 |
| LSMatch | 0.0 | 0.88 | 0.76 | 0.64 | 0.55 | 0.5 |
| OLaLa | 0.0 | 0.66 | 0.67 | 0.69 | 0.71 | 0.73 |
| AMD | 0.0 | 0.87 | 0.76 | 0.64 | 0.55 | 0.5 |
| ALIN | 0.0 | 0.88 | 0.79 | 0.68 | 0.59 | 0.55 |
| PropMatch | | | | | | |
| LogMap | 0.0 | 0.84 | 0.79 | 0.72 | 0.66 | 0.63 |
| edna | 0.0 | 0.88 | 0.78 | 0.67 | 0.59 | 0.54 |
| GraphMatcher | 0.0 | 0.78 | 0.8 | 0.83 | 0.87 | 0.89 |

| Matcher | Threshold | Precision | F0.5-measure | F1-measure | F2-measure | Recall |
|---|---|---|---|---|---|---|
| TOMATO | 0.0 | 0.17 | 0.18 | 0.18 | 0.19 | 0.2 |
| StringEquiv | 0.0 | 0.07 | 0.05 | 0.03 | 0.02 | 0.02 |
| Matcha | 0.78 | 0.48 | 0.48 | 0.47 | 0.46 | 0.46 |
| SORBETMtch | | | | | | |
| LogMapLt | 0.0 | 0.24 | 0.24 | 0.23 | 0.22 | 0.22 |
| LSMatch | | | | | | |
| OLaLa | 0.76 | 0.39 | 0.35 | 0.3 | 0.26 | 0.24 |
| AMD | | | | | | |
| ALIN | | | | | | |
| PropMatch | 0.0 | 0.83 | 0.74 | 0.64 | 0.56 | 0.52 |
| LogMap | 0.79 | 0.62 | 0.5 | 0.39 | 0.31 | 0.28 |
| edna | 0.0 | 0.21 | 0.18 | 0.14 | 0.12 | 0.11 |
| GraphMatcher | 0.91 | 0.72 | 0.55 | 0.4 | 0.32 | 0.28 |

| Matcher | Threshold | Precision | F0.5-measure | F1-measure | F2-measure | Recall |
|---|---|---|---|---|---|---|
| TOMATO | 0.0 | 0.61 | 0.58 | 0.55 | 0.52 | 0.5 |
| StringEquiv | 0.0 | 0.8 | 0.68 | 0.56 | 0.47 | 0.43 |
| Matcha | 0.66 | 0.7 | 0.69 | 0.67 | 0.65 | 0.64 |
| SORBETMtch | 0.0 | 0.78 | 0.75 | 0.7 | 0.66 | 0.64 |
| LogMapLt | 0.0 | 0.73 | 0.67 | 0.59 | 0.53 | 0.5 |
| LSMatch | 0.0 | 0.88 | 0.72 | 0.57 | 0.47 | 0.42 |
| OLaLa | 0.0 | 0.56 | 0.58 | 0.61 | 0.64 | 0.66 |
| AMD | 0.0 | 0.87 | 0.72 | 0.58 | 0.48 | 0.43 |
| ALIN | 0.0 | 0.88 | 0.75 | 0.61 | 0.52 | 0.47 |
| PropMatch | 0.0 | 0.83 | 0.29 | 0.15 | 0.1 | 0.08 |
| LogMap | 0.0 | 0.81 | 0.75 | 0.68 | 0.61 | 0.58 |
| edna | 0.0 | 0.79 | 0.7 | 0.59 | 0.51 | 0.47 |
| GraphMatcher | 0.0 | 0.76 | 0.77 | 0.78 | 0.79 | 0.8 |

| Matcher | Threshold | Precision | F0.5-measure | F1-measure | F2-measure | Recall |
|---|---|---|---|---|---|---|
| TOMATO | 0.0 | 0.69 | 0.64 | 0.59 | 0.54 | 0.51 |
| StringEquiv | 0.0 | 0.83 | 0.71 | 0.58 | 0.5 | 0.45 |
| Matcha | 0.0 | 0.71 | 0.69 | 0.66 | 0.64 | 0.62 |
| SORBETMtch | 0.0 | 0.75 | 0.74 | 0.72 | 0.71 | 0.7 |
| LogMapLt | 0.0 | 0.79 | 0.7 | 0.6 | 0.53 | 0.49 |
| LSMatch | 0.0 | 0.83 | 0.71 | 0.58 | 0.5 | 0.45 |
| OLaLa | 0.0 | 0.64 | 0.65 | 0.66 | 0.67 | 0.68 |
| AMD | 0.0 | 0.82 | 0.71 | 0.59 | 0.5 | 0.46 |
| ALIN | 0.0 | 0.82 | 0.73 | 0.62 | 0.54 | 0.5 |
| PropMatch | | | | | | |
| LogMap | 0.0 | 0.79 | 0.73 | 0.66 | 0.6 | 0.57 |
| edna | 0.0 | 0.82 | 0.72 | 0.61 | 0.52 | 0.48 |
| GraphMatcher | 0.0 | 0.74 | 0.75 | 0.78 | 0.8 | 0.82 |

| Matcher | Threshold | Precision | F0.5-measure | F1-measure | F2-measure | Recall |
|---|---|---|---|---|---|---|
| TOMATO | 0.0 | 0.15 | 0.15 | 0.16 | 0.17 | 0.17 |
| StringEquiv | 0.0 | 0.07 | 0.05 | 0.03 | 0.02 | 0.02 |
| Matcha | 0.78 | 0.48 | 0.48 | 0.47 | 0.46 | 0.46 |
| SORBETMtch | | | | | | |
| LogMapLt | 0.0 | 0.24 | 0.24 | 0.23 | 0.22 | 0.22 |
| LSMatch | | | | | | |
| OLaLa | 0.76 | 0.39 | 0.35 | 0.3 | 0.26 | 0.24 |
| AMD | | | | | | |
| ALIN | | | | | | |
| PropMatch | 0.0 | 0.86 | 0.77 | 0.66 | 0.58 | 0.54 |
| LogMap | 0.79 | 0.62 | 0.5 | 0.39 | 0.31 | 0.28 |
| edna | 0.0 | 0.21 | 0.18 | 0.14 | 0.12 | 0.11 |
| GraphMatcher | 0.91 | 0.72 | 0.55 | 0.4 | 0.32 | 0.28 |

| Matcher | Threshold | Precision | F0.5-measure | F1-measure | F2-measure | Recall |
|---|---|---|---|---|---|---|
| TOMATO | 0.0 | 0.57 | 0.54 | 0.51 | 0.48 | 0.46 |
| StringEquiv | 0.0 | 0.76 | 0.64 | 0.52 | 0.43 | 0.39 |
| Matcha | 0.65 | 0.65 | 0.64 | 0.62 | 0.6 | 0.59 |
| SORBETMtch | 0.0 | 0.75 | 0.71 | 0.66 | 0.62 | 0.59 |
| LogMapLt | 0.0 | 0.68 | 0.62 | 0.54 | 0.48 | 0.45 |
| LSMatch | 0.0 | 0.83 | 0.68 | 0.53 | 0.44 | 0.39 |
| OLaLa | 0.57 | 0.59 | 0.59 | 0.59 | 0.59 | 0.59 |
| AMD | 0.0 | 0.82 | 0.67 | 0.53 | 0.44 | 0.39 |
| ALIN | 0.0 | 0.82 | 0.69 | 0.56 | 0.48 | 0.43 |
| PropMatch | 0.0 | 0.86 | 0.29 | 0.15 | 0.1 | 0.08 |
| LogMap | 0.0 | 0.77 | 0.71 | 0.63 | 0.57 | 0.53 |
| edna | 0.0 | 0.74 | 0.65 | 0.54 | 0.47 | 0.43 |
| GraphMatcher | 0.0 | 0.73 | 0.73 | 0.73 | 0.74 | 0.74 |

| Matcher | Threshold | Precision | F0.5-measure | F1-measure | F2-measure | Recall |
|---|---|---|---|---|---|---|
| TOMATO | 0.0 | 0.68 | 0.64 | 0.6 | 0.55 | 0.53 |
| StringEquiv | 0.0 | 0.83 | 0.72 | 0.61 | 0.52 | 0.48 |
| Matcha | 0.66 | 0.71 | 0.69 | 0.67 | 0.64 | 0.63 |
| SORBETMtch | 0.0 | 0.73 | 0.73 | 0.72 | 0.71 | 0.71 |
| LogMapLt | 0.0 | 0.78 | 0.71 | 0.62 | 0.56 | 0.52 |
| LSMatch | 0.0 | 0.83 | 0.72 | 0.61 | 0.52 | 0.48 |
| OLaLa | 0.0 | 0.62 | 0.63 | 0.66 | 0.68 | 0.7 |
| AMD | 0.0 | 0.82 | 0.72 | 0.61 | 0.52 | 0.48 |
| ALIN | 0.0 | 0.82 | 0.74 | 0.64 | 0.56 | 0.52 |
| PropMatch | | | | | | |
| LogMap | 0.0 | 0.78 | 0.74 | 0.68 | 0.63 | 0.6 |
| edna | 0.0 | 0.82 | 0.73 | 0.63 | 0.55 | 0.51 |
| GraphMatcher | 0.0 | 0.73 | 0.75 | 0.79 | 0.82 | 0.85 |

| Matcher | Threshold | Precision | F0.5-measure | F1-measure | F2-measure | Recall |
|---|---|---|---|---|---|---|
| TOMATO | 0.0 | 0.15 | 0.16 | 0.16 | 0.17 | 0.18 |
| StringEquiv | 0.0 | 0.07 | 0.05 | 0.03 | 0.02 | 0.02 |
| Matcha | 0.93 | 0.67 | 0.57 | 0.47 | 0.4 | 0.36 |
| SORBETMtch | | | | | | |
| LogMapLt | 0.0 | 0.24 | 0.24 | 0.23 | 0.22 | 0.22 |
| LSMatch | | | | | | |
| OLaLa | 0.76 | 0.39 | 0.35 | 0.3 | 0.26 | 0.24 |
| AMD | | | | | | |
| ALIN | | | | | | |
| PropMatch | 0.0 | 0.86 | 0.78 | 0.68 | 0.6 | 0.56 |
| LogMap | 0.79 | 0.62 | 0.51 | 0.4 | 0.32 | 0.29 |
| edna | 0.0 | 0.21 | 0.18 | 0.14 | 0.12 | 0.11 |
| GraphMatcher | 0.91 | 0.72 | 0.56 | 0.41 | 0.33 | 0.29 |

| Matcher | Threshold | Precision | F0.5-measure | F1-measure | F2-measure | Recall |
|---|---|---|---|---|---|---|
| TOMATO | 0.0 | 0.57 | 0.55 | 0.52 | 0.49 | 0.47 |
| StringEquiv | 0.0 | 0.76 | 0.65 | 0.53 | 0.45 | 0.41 |
| Matcha | 0.0 | 0.62 | 0.62 | 0.62 | 0.62 | 0.62 |
| SORBETMtch | 0.0 | 0.73 | 0.7 | 0.66 | 0.63 | 0.61 |
| LogMapLt | 0.0 | 0.68 | 0.62 | 0.56 | 0.5 | 0.47 |
| LSMatch | 0.0 | 0.83 | 0.69 | 0.55 | 0.46 | 0.41 |
| OLaLa | 0.58 | 0.59 | 0.59 | 0.6 | 0.61 | 0.61 |
| AMD | 0.0 | 0.82 | 0.68 | 0.55 | 0.46 | 0.41 |
| ALIN | 0.0 | 0.82 | 0.7 | 0.57 | 0.48 | 0.44 |
| PropMatch | 0.0 | 0.86 | 0.29 | 0.15 | 0.1 | 0.08 |
| LogMap | 0.0 | 0.76 | 0.71 | 0.64 | 0.59 | 0.56 |
| edna | 0.0 | 0.74 | 0.66 | 0.56 | 0.49 | 0.45 |
| GraphMatcher | 0.0 | 0.71 | 0.72 | 0.74 | 0.76 | 0.77 |

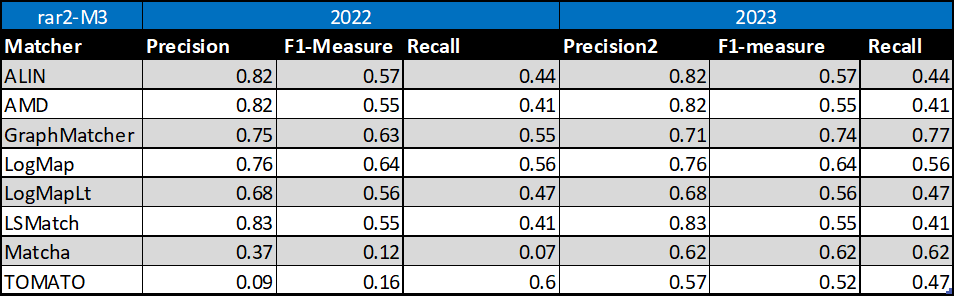

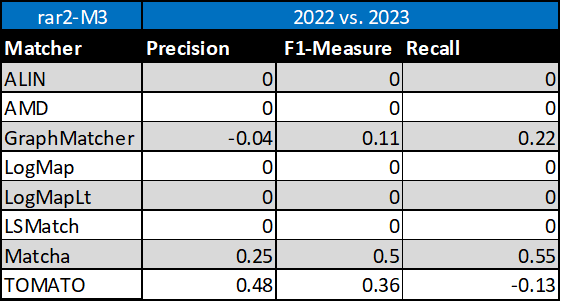

The table below summarizes the performance results of the eight tools that participated in the last two years of the OAEI Conference track with regard to reference alignment rar2.

Based on this evaluation, we can see that the results of five matching tools (ALIN, AMD, LogMap, LogMapLt, LSMatch) did not change. GraphMatcher slightly decreased its precision but increased its F1-measure and recall. Similarly, TOMATO decreased its recall but increased its precision and F1-measure. Matcha increased all three: precision, F1-measure and recall.

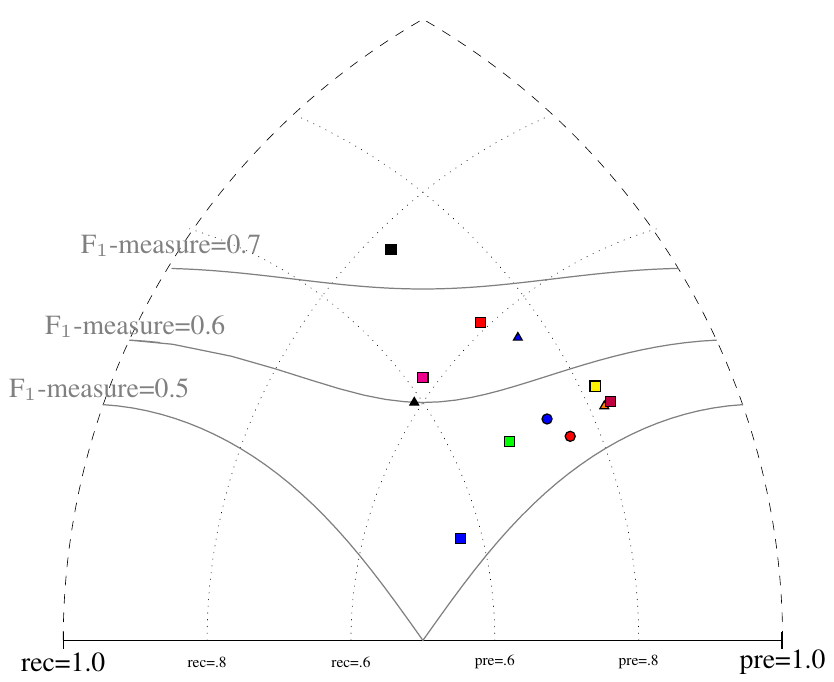

All tools are visualized in terms of their average F1-measure in the figure below. Tools are represented as squares or triangles, and baselines are represented as circles. The horizontal line depicts the level of precision/recall, while values of average F1-measure are depicted by areas bordered by the corresponding lines F1-measure=0.[5|6|7].

Based on the evaluation variants M1 and M2, four matchers (ALIN, AMD, LSMatch, and SORBETMtch) do not match properties at all. On the other hand, PropMatch does not match classes at all, while it dominates in matching properties. Naturally, this has a negative effect on the overall performance of these tools within the M3 evaluation variant.

[1] Michelle Cheatham, Pascal Hitzler: Conference v2.0: An Uncertain Version of the OAEI Conference Benchmark. International Semantic Web Conference (2) 2014: 33-48.

[2] Alessandro Solimando, Ernesto Jiménez-Ruiz, Giovanna Guerrini: Detecting and Correcting Conservativity Principle Violations in Ontology-to-Ontology Mappings. International Semantic Web Conference (2) 2014: 1-16.

[3] Alessandro Solimando, Ernesto Jiménez-Ruiz, Giovanna Guerrini: A Multi-strategy Approach for Detecting and Correcting Conservativity Principle Violations in Ontology Alignments. OWL: Experiences and Directions Workshop 2014 (OWLED 2014). 13-24.

[4] Ondřej Zamazal, Vojtěch Svátek: The Ten-Year OntoFarm and its Fertilization within the Onto-Sphere. Web Semantics: Science, Services and Agents on the World Wide Web 43, 2018: 46-53.