On this page, we report the results of the OAEI 2016 campaign for the MultiFarm track. Details on this data set can be found at the MultiFarm web page.

If you notice any kind of error (wrong numbers, incorrect information on a matching system, etc.), do not hesitate to contact us (the e-mail address is given in the last paragraph of this page).

This year we conducted a minimalistic evaluation focused on the blind data set. This data set includes the matching tasks involving the edas and ekaw ontologies (resulting in 55 x 24 tasks). Participants were able to test their systems on the open subset of tasks, available via the SEALS repository. The open subset comprises 45 x 25 tasks and does not include Italian translations.
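The factors 55 and 45 above appear to correspond to the number of language pairs, which can be checked with a minimal sketch. It assumes the 11 language codes used in the per-pair result table on this page; the helper name `language_pairs` is purely illustrative and not part of any OAEI tooling.

```python
from itertools import combinations

# Language codes as they appear in the per-pair result table of this page.
LANGUAGES = ["ar", "cn", "cz", "de", "en", "es", "fr", "it", "nl", "pt", "ru"]


def language_pairs(languages):
    """Return every unordered pair of distinct languages as 'xx-yy' strings."""
    return [f"{a}-{b}" for a, b in combinations(sorted(languages), 2)]


# Blind data set: all 11 languages.
all_pairs = language_pairs(LANGUAGES)

# Open subset: Italian translations are not included.
open_pairs = language_pairs([lang for lang in LANGUAGES if lang != "it"])

print(len(all_pairs))   # 55
print(len(open_pairs))  # 45
```

The second factor in each product (24 and 25) is not derived here, since the page does not spell out how it is obtained.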

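We distinguish two types of matching tasks: (i) tasks where two different ontologies have been translated into different languages, and (ii) tasks where the same ontology has been translated into different languages.

For the tasks of type (ii), good results are not directly related to the use of specific techniques for dealing with cross-lingual ontologies, but to the ability to exploit the fact that both ontologies have an identical structure.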

This year, 7 systems (out of 23) implemented cross-lingual matching strategies: AML, CroLOM-Lite, IOMAP (renamed SimCat-Lite), LogMap, LPHOM, LYAM++, and XMap. Among these systems, only CroLOM-Lite and SimCat-Lite are specifically designed for this task.

The number of participants increased with respect to the last campaign (5 in 2015, 3 in 2014, 7 in 2013, and 7 in 2012). For the sake of simplicity, in the following we call systems that implement cross-lingual matching strategies cross-lingual systems, and systems without that feature non-cross-lingual systems. The reader can refer to the OAEI papers for a detailed description of the strategies adopted by each system.

Following the OAEI evaluation rules, ALL systems should be evaluated in ALL tracks, although it is expected that some systems produce bad or no results. For this track, we observed different behaviors:

In the following, we report the results for the systems that are dedicated to the task or that were able to provide non-empty alignments for some tasks. This amounts to 12 systems (out of 23 participants).

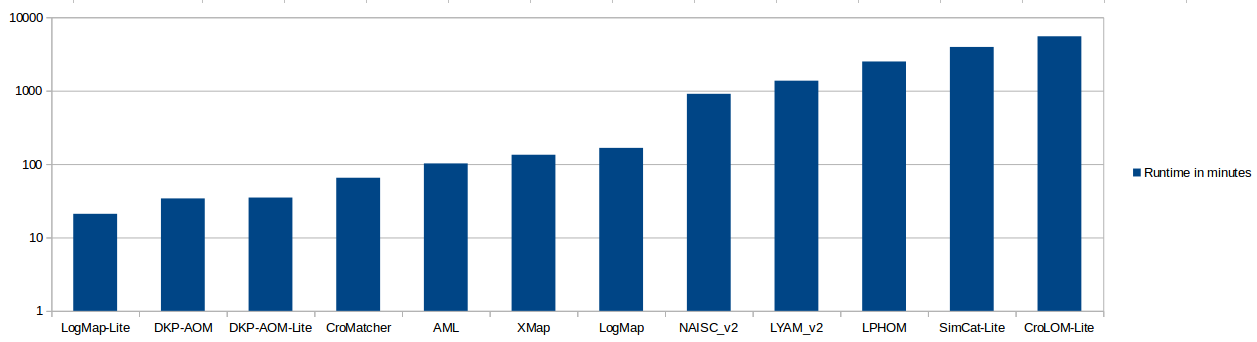

The systems were executed on an Ubuntu Linux machine configured with 8GB of RAM and an Intel Core CPU 2.00GHz x4 processors. All measurements are based on a single run. As shown below, we can observe large differences in the time required for a system to complete the 55 x 24 matching tasks. Note as well that concurrent access to the SEALS repositories during the evaluation period may have had an impact on the time required to complete the tasks.

The table below presents the aggregated results for the matching tasks involving the edas and ekaw ontologies. They have been computed using the Alignment API 4.6 and can differ slightly from those computed with the SEALS client. No threshold was applied to the results. They are measured in terms of classical precision and recall (future evaluations should include weighted and semantic metrics).
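As an illustration of the classical measures mentioned above, the following minimal sketch computes precision, F-measure and recall over sets of correspondences. It is not the Alignment API implementation; the function name `evaluate` and the entity names are hypothetical, and the sketch assumes both sets are non-empty and share at least one correspondence.

```python
def evaluate(found, reference):
    """Return (precision, f_measure, recall) for two sets of correspondences.

    Assumes both sets are non-empty and have at least one element in common,
    so that none of the divisions below is a division by zero.
    """
    correct = len(found & reference)
    precision = correct / len(found)      # share of found correspondences that are correct
    recall = correct / len(reference)     # share of reference correspondences that were found
    f_measure = 2 * precision * recall / (precision + recall)  # harmonic mean
    return precision, f_measure, recall


# Hypothetical reference alignment and system output (entity names are made up).
reference = {
    ("o1:Paper", "o2:Article"),
    ("o1:Author", "o2:Auteur"),
    ("o1:Review", "o2:Revue"),
    ("o1:Chair", "o2:President"),
}
found = {
    ("o1:Paper", "o2:Article"),
    ("o1:Author", "o2:Auteur"),
    ("o1:Paper", "o2:Revue"),  # incorrect correspondence
}

precision, f_measure, recall = evaluate(found, reference)
print(f"{precision:.2f} {f_measure:.2f} {recall:.2f}")  # 0.67 0.57 0.50
```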

For both types of tasks, most systems favor precision to the detriment of recall. The exception is LPHOM, which (together with LYAM) generated huge sets of correspondences. As expected, most systems implementing cross-lingual techniques outperform the non-cross-lingual systems; the exceptions are LPHOM, LYAM and XMap, which perform poorly for different reasons, namely many internal exceptions or a poor ability to deal with the specificities of the task. On the other hand, this year many non-cross-lingual systems that match at the schema level were executed with errors (CroMatcher, GA4OM) or were not able to deal with the tasks at all (Alin, Lily, NAISC). Hence, their structural strategies could not actually be evaluated (tasks of type ii). For both types of tasks, DKP-AOM and DKP-AOM-Lite perform well in terms of precision but generate few correspondences, and only for fewer than half of the matching tasks.

In particular, for the tasks of type (i), AML outperforms all other systems in terms of F-measure, followed by LogMap, CroLOM-Lite and SimCat-Lite. However, LogMap outperforms all systems in terms of precision while keeping a relatively good recall. For the tasks of type (ii), the performance of AML decreases, while LogMap keeps its good results and outperforms all other systems, followed by CroLOM-Lite and SimCat-Lite.

Columns marked (i) refer to tasks on different ontologies; columns marked (ii) refer to tasks on the same ontologies.

| System | Time | #pairs | Size (i) | Prec. (i) | F-m. (i) | Rec. (i) | Size (ii) | Prec. (ii) | F-m. (ii) | Rec. (ii) |
|---|---|---|---|---|---|---|---|---|---|---|
| AML | 102 | 55 | 13.45 | .51(.51) | .45(.45) | .40(.40) | 39.99 | .92(.92) | .31(.31) | .19(.19) |
| CroLOM-Lite | 5501 | 55 | 8.56 | .55(.55) | .36(.36) | .28(.28) | 38.76 | .89(.90) | .40(.40) | .26(.26) |
| LogMap | 166 | 55 | 7.27 | .71(.71) | .37(.37) | .26(.26) | 52.81 | .96(.96) | .44(.44) | .30(.30) |
| LPHOM | 2497 | 34 | 84.22 | .01(.02) | .02(.04) | .08(.08) | 127.91 | .13(.22) | .13(.21) | .13(.13) |
| LYAM | 1367 | 24 | 177.30 | .008(.002) | .006(.01) | .002(.002) | 283.95 | .03(.07) | .02(.07) | .03(.03) |
| SimCat-Lite | 3938 | 54 | 7.07 | .59(.60) | .34(.35) | .25(.25) | 30.11 | .90(.93) | .33(.34) | .21(.21) |
| XMap | 134 | 31 | 3.93 | .30(.54) | .007(.01) | .003(.003) | 0.0 | .00(.00) | .00(.00) | .00(.00) |
| CroMatcher | 65 | 25 | 2.91 | .29(.64) | .004(.01) | .002(.002) | 0.0 | .00(.00) | .00(.00) | .00(.00) |
| DKP-AOM | 34 | 24 | 2.58 | .42(.98) | .03(.08) | .02(.02) | 4.37 | .49(1.0) | .01(.03) | .007(0.07) |
| DKP-AOM-Lite | 35 | 24 | 2.58 | .42(.98) | .03(.08) | .02(.02) | 4.37 | .49(1.0) | .01(.03) | .007(.007) |
| LogMapLite | 21 | 55 | 1.16 | .35(.35) | .04(.09) | .02(.02) | 94.50 | .01(.01) | .01(.01) | .008(.008) |
| NAISC | 905 | 55 | 1.94 | .002(.002) | .00(.006) | .00(.00) | 1.84 | .01(.01) | .00(.01) | .00(.01) |

Cross-lingual systems: AML, CroLOM-Lite, LogMap, LPHOM, LYAM, SimCat-Lite, XMap. Non-specific systems: CroMatcher, DKP-AOM, DKP-AOM-Lite, LogMapLite, NAISC. For each system, the three columns give precision, F-measure and recall.

| Pair | AML Prec. | AML F-m. | AML Rec. | CroLOM-Lite Prec. | CroLOM-Lite F-m. | CroLOM-Lite Rec. | LogMap Prec. | LogMap F-m. | LogMap Rec. | LPHOM Prec. | LPHOM F-m. | LPHOM Rec. | LYAM Prec. | LYAM F-m. | LYAM Rec. | SimCat-Lite Prec. | SimCat-Lite F-m. | SimCat-Lite Rec. | XMap Prec. | XMap F-m. | XMap Rec. | CroMatcher Prec. | CroMatcher F-m. | CroMatcher Rec. | DKP-AOM Prec. | DKP-AOM F-m. | DKP-AOM Rec. | DKP-AOM-Lite Prec. | DKP-AOM-Lite F-m. | DKP-AOM-Lite Rec. | LogMapLite Prec. | LogMapLite F-m. | LogMapLite Rec. | NAISC Prec. | NAISC F-m. | NAISC Rec. |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| ar-cn | 0.38 | 0.23 | 0.17 | 0.41 | 0.18 | 0.11 | 0.62 | 0.19 | 0.11 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.40 | 0.12 | 0.07 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | 0.00 | NaN | 0.00 |
| ar-cz | 0.47 | 0.40 | 0.36 | 0.65 | 0.36 | 0.25 | 0.72 | 0.40 | 0.28 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.67 | 0.28 | 0.17 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | 0.00 | NaN | 0.00 |
| ar-de | 0.44 | 0.38 | 0.34 | 0.60 | 0.37 | 0.26 | 0.73 | 0.37 | 0.25 | 0.08 | 0.13 | 0.38 | NaN | NaN | 0.00 | 0.63 | 0.29 | 0.19 | 0.00 | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | 0.00 | NaN | 0.00 |
| ar-en | 0.56 | 0.44 | 0.37 | 0.64 | 0.40 | 0.29 | 0.73 | 0.41 | 0.28 | 0.09 | 0.15 | 0.45 | NaN | NaN | 0.00 | 0.63 | 0.31 | 0.20 | 0.67 | 0.02 | 0.01 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | 0.00 | NaN | 0.00 |
| ar-es | 0.42 | 0.40 | 0.38 | 0.61 | 0.38 | 0.27 | 0.69 | 0.36 | 0.25 | 0.07 | 0.12 | 0.36 | NaN | NaN | 0.00 | 0.61 | 0.30 | 0.20 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | 0.03 | 0.01 | 0.00 |
| ar-fr | 0.49 | 0.43 | 0.38 | 0.57 | 0.33 | 0.23 | 0.65 | 0.30 | 0.20 | 0.08 | 0.14 | 0.41 | NaN | NaN | 0.00 | 0.57 | 0.25 | 0.16 | 1.00 | 0.01 | 0.00 | 0.00 | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | 0.00 | NaN | 0.00 |
| ar-it | 0.47 | 0.43 | 0.39 | 0.69 | 0.37 | 0.26 | 0.71 | 0.31 | 0.20 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.71 | 0.32 | 0.21 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | 0.00 | NaN | 0.00 |
| ar-nl | 0.47 | 0.41 | 0.37 | 0.63 | 0.38 | 0.27 | 0.74 | 0.41 | 0.28 | 0.08 | 0.14 | 0.42 | NaN | NaN | 0.00 | 0.64 | 0.31 | 0.20 | 1.00 | 0.01 | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | 0.00 | NaN | 0.00 |
| ar-pt | 0.51 | 0.46 | 0.42 | 0.64 | 0.38 | 0.28 | 0.72 | 0.38 | 0.25 | 0.08 | 0.13 | 0.40 | NaN | NaN | 0.00 | 0.63 | 0.30 | 0.19 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | 0.00 | NaN | 0.00 |
| ar-ru | 0.41 | 0.33 | 0.28 | 0.61 | 0.25 | 0.16 | 0.77 | 0.41 | 0.28 | 0.06 | 0.09 | 0.27 | NaN | NaN | 0.00 | 0.65 | 0.23 | 0.14 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | 0.03 | 0.01 | 0.00 |
| cn-cz | 0.45 | 0.34 | 0.27 | 0.43 | 0.20 | 0.13 | 0.72 | 0.27 | 0.17 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.56 | 0.18 | 0.10 | 0.00 | NaN | 0.00 | 0.00 | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | 0.00 | NaN | 0.00 |
| cn-de | 0.46 | 0.32 | 0.25 | 0.52 | 0.26 | 0.17 | 0.71 | 0.23 | 0.13 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.64 | 0.23 | 0.14 | 1.00 | 0.01 | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | 0.00 | NaN | 0.00 |
| cn-en | 0.55 | 0.36 | 0.27 | 0.41 | 0.22 | 0.15 | 0.85 | 0.22 | 0.13 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.57 | 0.23 | 0.15 | 0.36 | 0.02 | 0.01 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | 0.00 | NaN | 0.00 |
| cn-es | 0.44 | 0.37 | 0.31 | 0.46 | 0.24 | 0.17 | 0.66 | 0.25 | 0.15 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.53 | 0.21 | 0.13 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | 0.00 | NaN | 0.00 |
| cn-fr | 0.46 | 0.37 | 0.31 | 0.51 | 0.24 | 0.15 | 0.70 | 0.23 | 0.14 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.60 | 0.22 | 0.13 | 0.00 | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | 0.00 | NaN | 0.00 |
| cn-it | 0.50 | 0.39 | 0.31 | 0.43 | 0.23 | 0.16 | 0.63 | 0.17 | 0.10 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.58 | 0.22 | 0.13 | 0.00 | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | 0.00 | NaN | 0.00 |
| cn-nl | 0.47 | 0.32 | 0.24 | 0.40 | 0.21 | 0.14 | 0.70 | 0.21 | 0.12 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.47 | 0.17 | 0.10 | 0.00 | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | 0.00 | NaN | 0.00 |
| cn-pt | 0.51 | 0.41 | 0.35 | 0.44 | 0.24 | 0.17 | 0.77 | 0.25 | 0.15 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.51 | 0.21 | 0.13 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | 0.00 | NaN | 0.00 |
| cn-ru | 0.49 | 0.39 | 0.32 | 0.31 | 0.19 | 0.14 | 0.73 | 0.31 | 0.19 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.36 | 0.17 | 0.11 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | 0.00 | NaN | 0.00 |
| cz-de | 0.51 | 0.46 | 0.43 | 0.61 | 0.39 | 0.29 | 0.70 | 0.39 | 0.27 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.71 | 0.40 | 0.28 | 1.00 | 0.02 | 0.01 | 1.00 | 0.01 | 0.00 | 1.00 | 0.13 | 0.07 | 1.00 | 0.13 | 0.07 | 0.93 | 0.13 | 0.07 | 0.00 | NaN | 0.00 |
| cz-en | 0.61 | 0.50 | 0.42 | 0.64 | 0.42 | 0.31 | 0.79 | 0.50 | 0.37 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.74 | 0.43 | 0.30 | 0.63 | 0.03 | 0.01 | NaN | NaN | 0.00 | 1.00 | 0.07 | 0.04 | 1.00 | 0.07 | 0.04 | 0.65 | 0.07 | 0.04 | 0.00 | NaN | 0.00 |
| cz-es | 0.55 | 0.55 | 0.54 | 0.64 | 0.42 | 0.32 | 0.69 | 0.39 | 0.27 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.71 | 0.42 | 0.30 | 0.75 | 0.02 | 0.01 | 1.00 | 0.01 | 0.01 | 1.00 | 0.05 | 0.02 | 1.00 | 0.05 | 0.02 | 0.82 | 0.05 | 0.02 | 0.00 | NaN | 0.00 |
| cz-fr | 0.56 | 0.51 | 0.47 | 0.64 | 0.40 | 0.29 | 0.67 | 0.40 | 0.29 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.69 | 0.37 | 0.25 | 0.50 | 0.02 | 0.01 | 1.00 | 0.01 | 0.01 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | 0.00 | NaN | 0.00 |
| cz-it | 0.52 | 0.50 | 0.49 | 0.77 | 0.05 | 0.03 | 0.62 | 0.33 | 0.23 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.67 | 0.02 | 0.01 | NaN | NaN | 0.00 | 1.00 | 0.05 | 0.03 | 1.00 | 0.05 | 0.03 | 0.83 | 0.05 | 0.03 | 0.00 | NaN | 0.00 |
| cz-nl | 0.59 | 0.55 | 0.52 | 0.60 | 0.42 | 0.33 | 0.72 | 0.45 | 0.33 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.69 | 0.43 | 0.31 | 1.00 | 0.02 | 0.01 | NaN | NaN | 0.00 | 1.00 | 0.08 | 0.04 | 1.00 | 0.08 | 0.04 | 0.80 | 0.08 | 0.04 | 0.00 | NaN | 0.00 |
| cz-pt | 0.54 | 0.52 | 0.51 | 0.65 | 0.43 | 0.32 | 0.72 | 0.44 | 0.32 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.72 | 0.42 | 0.30 | 0.67 | 0.01 | 0.01 | 0.75 | 0.02 | 0.01 | 1.00 | 0.11 | 0.06 | 1.00 | 0.11 | 0.06 | 0.88 | 0.11 | 0.06 | 0.00 | NaN | 0.00 |
| cz-ru | 0.57 | 0.51 | 0.47 | 0.62 | 0.42 | 0.32 | 0.75 | 0.46 | 0.33 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.72 | 0.37 | 0.25 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | 0.00 | NaN | 0.00 |
| de-en | 0.56 | 0.45 | 0.38 | 0.58 | 0.47 | 0.39 | 0.78 | 0.44 | 0.31 | 0.11 | 0.18 | 0.53 | 0.02 | 0.02 | 0.04 | 0.64 | 0.46 | 0.36 | 0.50 | 0.02 | 0.01 | NaN | NaN | 0.00 | 0.95 | 0.20 | 0.11 | 0.95 | 0.20 | 0.11 | 0.89 | 0.20 | 0.11 | 0.00 | NaN | 0.00 |
| de-es | 0.45 | 0.42 | 0.39 | 0.56 | 0.44 | 0.36 | 0.73 | 0.39 | 0.27 | 0.09 | 0.16 | 0.46 | 0.00 | 0.01 | 0.04 | 0.60 | 0.42 | 0.32 | 0.33 | 0.01 | 0.00 | 1.00 | 0.01 | 0.00 | 1.00 | 0.01 | 0.01 | 1.00 | 0.01 | 0.01 | 0.50 | 0.01 | 0.01 | 0.00 | NaN | 0.00 |
| de-fr | 0.50 | 0.47 | 0.43 | 0.60 | 0.46 | 0.37 | 0.76 | 0.43 | 0.30 | 0.09 | 0.15 | 0.45 | 0.00 | 0.00 | 0.01 | 0.65 | 0.45 | 0.35 | 0.67 | 0.01 | 0.01 | 1.00 | 0.01 | 0.00 | 0.89 | 0.04 | 0.02 | 0.89 | 0.04 | 0.02 | 0.75 | 0.05 | 0.02 | 0.04 | 0.01 | 0.00 |
| de-it | 0.55 | 0.49 | 0.45 | 0.56 | 0.42 | 0.34 | 0.68 | 0.36 | 0.25 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.59 | 0.40 | 0.30 | 0.00 | NaN | 0.00 | NaN | NaN | 0.00 | 1.00 | 0.05 | 0.03 | 1.00 | 0.05 | 0.03 | 0.83 | 0.05 | 0.03 | 0.00 | NaN | 0.00 |
| de-nl | 0.53 | 0.46 | 0.42 | 0.56 | 0.44 | 0.37 | 0.78 | 0.45 | 0.32 | 0.01 | 0.01 | 0.04 | 0.02 | 0.04 | 0.19 | 0.60 | 0.44 | 0.34 | 1.00 | 0.01 | 0.00 | NaN | NaN | 0.00 | 1.00 | 0.10 | 0.05 | 1.00 | 0.10 | 0.05 | 0.90 | 0.10 | 0.05 | 0.00 | NaN | 0.00 |
| de-pt | 0.53 | 0.46 | 0.41 | 0.60 | 0.46 | 0.37 | 0.70 | 0.38 | 0.26 | 0.00 | 0.00 | 0.01 | 0.00 | 0.01 | 0.04 | 0.64 | 0.46 | 0.36 | 0.50 | 0.01 | 0.00 | NaN | NaN | 0.00 | 1.00 | 0.07 | 0.04 | 1.00 | 0.07 | 0.04 | 0.87 | 0.07 | 0.04 | 0.00 | NaN | 0.00 |
| de-ru | 0.53 | 0.44 | 0.37 | 0.47 | 0.25 | 0.17 | 0.78 | 0.44 | 0.31 | 0.01 | 0.01 | 0.04 | 0.00 | 0.00 | 0.01 | 0.51 | 0.24 | 0.16 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | 0.00 | NaN | 0.00 |
| en-es | 0.57 | 0.45 | 0.37 | 0.56 | 0.44 | 0.37 | 0.73 | 0.44 | 0.32 | 0.01 | 0.01 | 0.04 | 0.01 | 0.01 | 0.02 | 0.59 | 0.42 | 0.33 | NaN | NaN | 0.00 | 1.00 | 0.01 | 0.01 | 1.00 | 0.03 | 0.02 | 1.00 | 0.03 | 0.02 | 0.75 | 0.03 | 0.02 | 0.00 | NaN | 0.00 |
| en-fr | 0.54 | 0.44 | 0.37 | 0.55 | 0.42 | 0.35 | 0.70 | 0.44 | 0.32 | 0.01 | 0.01 | 0.03 | 0.02 | 0.04 | 0.12 | 0.59 | 0.40 | 0.31 | NaN | NaN | 0.00 | 1.00 | 0.01 | 0.00 | 0.84 | 0.08 | 0.04 | 0.84 | 0.08 | 0.04 | 0.79 | 0.10 | 0.05 | 0.00 | NaN | 0.00 |
| en-it | 0.55 | 0.44 | 0.37 | 0.50 | 0.40 | 0.34 | 0.66 | 0.39 | 0.28 | 0.01 | 0.01 | 0.03 | 0.15 | 0.02 | 0.01 | 0.57 | 0.41 | 0.31 | NaN | NaN | 0.00 | 1.00 | 0.01 | 0.01 | 0.95 | 0.09 | 0.05 | 0.95 | 0.09 | 0.05 | 0.86 | 0.09 | 0.05 | 0.01 | 0.00 | 0.00 |
| en-nl | 0.62 | 0.50 | 0.41 | 0.55 | 0.46 | 0.39 | 0.80 | 0.54 | 0.40 | 0.01 | 0.01 | 0.04 | 0.03 | 0.06 | 0.28 | 0.60 | 0.46 | 0.37 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 1.00 | 0.12 | 0.07 | 1.00 | 0.12 | 0.07 | 0.86 | 0.13 | 0.07 | 0.00 | NaN | 0.00 |
| en-pt | 0.58 | 0.49 | 0.42 | 0.56 | 0.45 | 0.37 | 0.76 | 0.52 | 0.39 | 0.01 | 0.01 | 0.04 | 0.02 | 0.03 | 0.04 | 0.61 | 0.45 | 0.35 | NaN | NaN | 0.00 | 1.00 | 0.01 | 0.00 | 1.00 | 0.09 | 0.05 | 1.00 | 0.09 | 0.05 | 0.86 | 0.09 | 0.05 | 0.01 | 0.00 | 0.00 |
| en-ru | 0.53 | 0.40 | 0.32 | 0.55 | 0.30 | 0.21 | 0.90 | 0.48 | 0.33 | 0.01 | 0.01 | 0.03 | 0.02 | 0.04 | 0.11 | 0.65 | 0.32 | 0.21 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | 0.00 | NaN | 0.00 |
| es-fr | 0.50 | 0.49 | 0.47 | 0.58 | 0.46 | 0.38 | 0.69 | 0.40 | 0.28 | 0.01 | 0.01 | 0.03 | 0.00 | 0.00 | 0.01 | 0.69 | 0.18 | 0.10 | 0.78 | 0.04 | 0.02 | 1.00 | 0.01 | 0.01 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | 0.00 | NaN | 0.00 |
| es-it | 0.57 | 0.56 | 0.56 | 0.54 | 0.45 | 0.39 | 0.61 | 0.39 | 0.28 | 0.00 | 0.01 | 0.02 | 0.07 | 0.01 | 0.01 | 0.58 | 0.45 | 0.37 | 0.56 | 0.03 | 0.01 | 1.00 | 0.01 | 0.01 | 1.00 | 0.16 | 0.09 | 1.00 | 0.16 | 0.09 | 0.94 | 0.16 | 0.09 | 0.00 | NaN | 0.00 |
| es-nl | 0.56 | 0.54 | 0.52 | 0.56 | 0.48 | 0.43 | 0.71 | 0.40 | 0.28 | 0.00 | 0.00 | 0.01 | 0.00 | 0.00 | 0.01 | 0.60 | 0.48 | 0.40 | 1.00 | 0.01 | 0.01 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | 0.00 | NaN | 0.00 |
| es-pt | 0.55 | 0.54 | 0.54 | 0.54 | 0.47 | 0.42 | 0.71 | 0.45 | 0.33 | 0.01 | 0.01 | 0.04 | 0.00 | 0.00 | 0.02 | 0.59 | 0.48 | 0.41 | 0.71 | 0.03 | 0.01 | 1.00 | 0.03 | 0.02 | 0.95 | 0.20 | 0.11 | 0.95 | 0.20 | 0.11 | 0.82 | 0.20 | 0.11 | 0.00 | NaN | 0.00 |
| es-ru | 0.55 | 0.51 | 0.48 | 0.55 | 0.36 | 0.26 | 0.76 | 0.41 | 0.28 | 0.01 | 0.01 | 0.03 | 0.00 | 0.01 | 0.07 | 0.60 | 0.36 | 0.25 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | 0.00 | NaN | 0.00 |
| fr-it | 0.52 | 0.50 | 0.48 | 0.55 | 0.42 | 0.34 | 0.61 | 0.40 | 0.30 | 0.00 | 0.00 | 0.01 | NaN | NaN | 0.00 | 0.58 | 0.42 | 0.33 | 0.20 | 0.01 | 0.00 | 0.67 | 0.01 | 0.01 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | 0.00 | NaN | 0.00 |
| fr-nl | 0.52 | 0.50 | 0.49 | 0.56 | 0.46 | 0.39 | 0.71 | 0.45 | 0.33 | 0.01 | 0.01 | 0.03 | 0.00 | 0.00 | 0.03 | 0.61 | 0.47 | 0.37 | 0.75 | 0.02 | 0.01 | 1.00 | 0.01 | 0.01 | 1.00 | 0.09 | 0.05 | 1.00 | 0.09 | 0.05 | 0.90 | 0.09 | 0.05 | 0.00 | NaN | 0.00 |
| fr-pt | 0.54 | 0.51 | 0.49 | 0.59 | 0.47 | 0.39 | 0.67 | 0.40 | 0.28 | 0.01 | 0.02 | 0.05 | 0.01 | 0.01 | 0.10 | 0.61 | 0.47 | 0.38 | 0.00 | NaN | 0.00 | 1.00 | 0.02 | 0.01 | 1.00 | 0.01 | 0.01 | 1.00 | 0.01 | 0.01 | 0.50 | 0.01 | 0.01 | 0.00 | NaN | 0.00 |
| fr-ru | 0.53 | 0.49 | 0.45 | 0.53 | 0.31 | 0.22 | 0.73 | 0.36 | 0.24 | 0.01 | 0.01 | 0.04 | 0.00 | 0.01 | 0.03 | 0.59 | 0.32 | 0.22 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | 0.00 | NaN | 0.00 |
| it-nl | 0.51 | 0.49 | 0.47 | 0.54 | 0.45 | 0.38 | 0.63 | 0.36 | 0.25 | 0.01 | 0.01 | 0.03 | 0.11 | 0.02 | 0.01 | 0.59 | 0.45 | 0.37 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 1.00 | 0.06 | 0.03 | 1.00 | 0.06 | 0.03 | 0.85 | 0.06 | 0.03 | 0.00 | NaN | 0.00 |
| it-pt | 0.54 | 0.54 | 0.53 | 0.50 | 0.42 | 0.36 | 0.62 | 0.39 | 0.28 | 0.01 | 0.01 | 0.03 | NaN | NaN | 0.00 | 0.61 | 0.49 | 0.41 | NaN | NaN | 0.00 | 0.80 | 0.02 | 0.01 | 0.97 | 0.17 | 0.09 | 0.97 | 0.17 | 0.09 | 0.92 | 0.17 | 0.09 | 0.00 | NaN | 0.00 |
| it-ru | 0.50 | 0.47 | 0.44 | 0.54 | 0.35 | 0.26 | 0.68 | 0.34 | 0.22 | 0.00 | 0.01 | 0.02 | NaN | NaN | 0.00 | 0.62 | 0.37 | 0.26 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | 0.00 | NaN | 0.00 |
| nl-pt | 0.57 | 0.57 | 0.57 | 0.60 | 0.50 | 0.43 | 0.70 | 0.45 | 0.33 | 0.00 | 0.01 | 0.02 | 0.00 | 0.00 | 0.02 | 0.62 | 0.50 | 0.42 | 0.67 | 0.01 | 0.01 | NaN | NaN | 0.00 | 1.00 | 0.06 | 0.03 | 1.00 | 0.06 | 0.03 | 0.86 | 0.06 | 0.03 | 0.00 | NaN | 0.00 |
| nl-ru | 0.57 | 0.50 | 0.45 | 0.57 | 0.39 | 0.29 | 0.79 | 0.46 | 0.33 | 0.01 | 0.01 | 0.03 | 0.00 | 0.01 | 0.09 | 0.64 | 0.40 | 0.29 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | 0.00 | NaN | 0.00 |
| pt-ru | 0.50 | 0.45 | 0.41 | 0.54 | 0.34 | 0.24 | 0.75 | 0.47 | 0.34 | 0.00 | 0.01 | 0.02 | 0.00 | 0.00 | 0.03 | 0.59 | 0.34 | 0.24 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | NaN | NaN | 0.00 | 0.00 | NaN | 0.00 | 0.00 | NaN | 0.00 |

NaN: division by zero, likely due to an empty alignment.
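The two NaN patterns that recur in the per-pair table can be reproduced with a small sketch working on counts. This is an assumption about how the values arise, not the actual evaluation code; the helper names `ratio` and `scores` and the counts used below are hypothetical.

```python
import math


def ratio(numerator, denominator):
    """Divide, returning NaN instead of raising when the denominator is zero."""
    return numerator / denominator if denominator else math.nan


def scores(n_correct, n_found, n_reference):
    """Return (precision, f_measure, recall) from correspondence counts."""
    precision = ratio(n_correct, n_found)
    recall = ratio(n_correct, n_reference)
    # NaN propagates through the arithmetic, so an undefined precision
    # also yields an undefined F-measure.
    f_measure = ratio(2 * precision * recall, precision + recall)
    return precision, f_measure, recall


# Empty alignment: precision is 0/0, hence "NaN | NaN | 0.00".
print(scores(n_correct=0, n_found=0, n_reference=10))

# Non-empty alignment with no correct correspondence: precision and recall
# are both 0, so only the F-measure is 0/0, hence "0.00 | NaN | 0.00".
print(scores(n_correct=0, n_found=3, n_reference=10))
```

Under this reading, a row segment such as `0.00 | NaN | 0.00` indicates that the system returned some correspondences for that language pair, but none of them was correct.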

The number of participants increased with respect to the last campaign: 7 systems implemented cross-lingual strategies. For most of them, this involves integrating a translation step before the matching itself. Only 2 of them were specifically designed for the task.

However, some systems implementing cross-lingual strategies were not able to fully deal with the difficulties of the task, while others were not able to complete many tasks due to internal errors, which is also the case for some non-specific systems.

Of the 23 participants, about half (12) were evaluated in this track.

In terms of performance and stability, AML and LogMap keep their positions with respect to the previous campaigns, followed this year by the newcomers CroLOM-Lite and SimCat-Lite.

[1] Christian Meilicke, Raul Garcia-Castro, Fred Freitas, Willem Robert van Hage, Elena Montiel-Ponsoda, Ryan Ribeiro de Azevedo, Heiner Stuckenschmidt, Ondrej Svab-Zamazal, Vojtech Svatek, Andrei Tamilin, Cassia Trojahn, Shenghui Wang. MultiFarm: A Benchmark for Multilingual Ontology Matching. Accepted for publication at the Journal of Web Semantics.

An author's version of the paper can be found at the MultiFarm homepage, where the data set is described in detail.

This track is organized by Cassia Trojahn dos Santos. If you have any problems working with the ontologies, any questions or suggestions, feel free to write an email to cassia [.] trojahn [at] irit [.] fr.